We all know what a good online tutor is like. Of course we do. She’s friendly, approachable and… errr… nice… Yes, quite. It all starts to get a little hazy. How exactly do we judge whether someone is a ‘good’ online tutor or facilitator (or not), beyond our own subjective impressions? What about organisations that employ hundreds of online tutors: how can they ensure that everybody is up to scratch, and how can they ensure accountability? In a nutshell, how do we evaluate online tutors?

Traditionally, teaching skills (both f2f and online) have been evaluated with checklists. There’s nothing wrong with a nice checklist, and there is one for K-12 online tutors here. But how does a checklist translate into helping online tutors develop? In other words, how can we help an online tutor whose checklist shows a long list of negatives to develop as a professional?

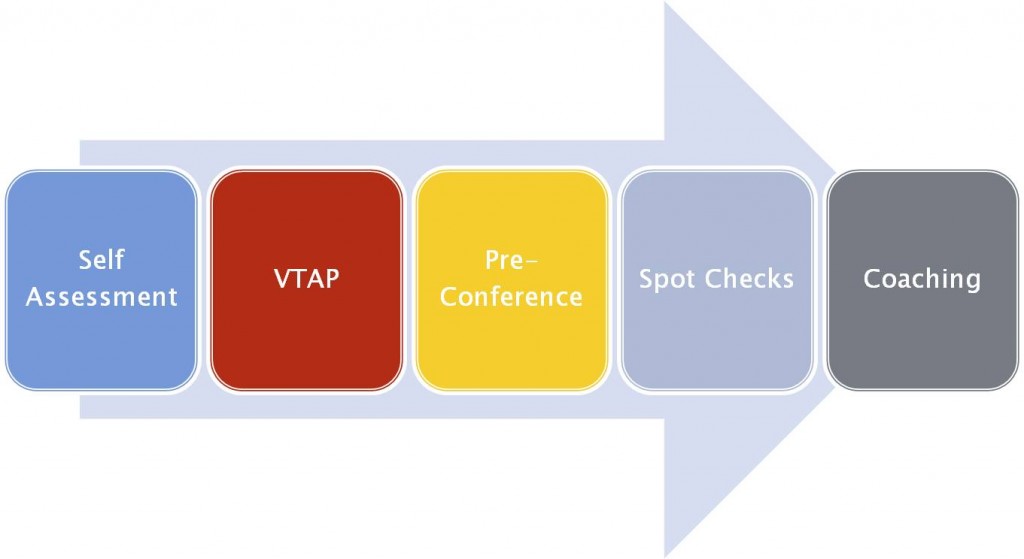

I recently attended an EdWeek webinar that explored this topic. Bryan Setser of the North Carolina Virtual Public School outlined the five-stage developmental evaluation programme that his organisation implements:

Bryan Setser’s PowerPoint presentation is here.

Briefly, each of these five stages works as follows:

1 Teacher self-assessment

Online tutors are given a grid of detailed descriptors and rate their own performance against each one.

2 Virtual teaching action plan

Areas that the self-assessment identifies as needing work are prioritised and structured into an action plan for ongoing professional development. This action plan includes personal goals, institutional/school goals and goals for students.

3 Pre-conference

This is a synchronous meeting with coaches/mentors on a video-conferencing platform, held to share the results of the first two stages and to clarify and focus the action plan.

4 Spot checks

Mentors/supervisors occasionally check tutor performance in the VLE, for example by looking at the contact log, course statistics, the gradebook (is it up to date?), and the quality of feedback and communication with students…

5 Coaching

The areas identified through the four stages above are used for formative evaluation and just-in-time professional development.

AND/OR

5 Evaluation

A stoplight system is used for summative evaluation:

- red = danger, tutor not competent

- yellow = tutor needs help with x, y, z

- green = tutor doing well

Depending on the result, the cycle of stages 1 to 5 may start again (or, apparently, the tutor may get kicked out of the school if several cycles have already taken place with no improvement).

I found this an interesting approach to online tutor evaluation, with its emphasis on (structured and supported) development rather than assessment. Here is what I especially like:

- The programme starts with self-assessment, so tutors are encouraged to set their own agendas for professional development.

- Tutors can work on their action plans with colleagues.

- The action plans are goal-oriented.

- Support is offered in the form of mentors/coaches.

- Tutors keep a record of each stage (self-evaluation, action plans and recordings of the pre-conference).

- The stoplight evaluation (which was new to me) is pretty darn clear!

Although most of this could also be applied to f2f teaching, the online medium does have advantages at certain points. A pre-conference held face-to-face is unlikely to be recorded. And spot checks would be very disruptive and threatening if they involved bursting into teachers’ classrooms unannounced! Even announced f2f visits are scary.

So, if you’re an online tutor or work with teams of online tutors, how do you carry out professional development and evaluation? Do you carry out evaluation at all? What do you think of this programme? Can you see a use for it in your context? I’d love to hear your thoughts in the Comments section below 🙂

Nicky Hockly

The Consultants-E

September 2010

Thanks for this post. It’s a great collection of quality assurance measures that are useful to anyone operating in this field.

One thing that I feel is missing is a transparency factor. By that I mean that most of these measures are evaluated internally, but it is very hard (impossible?) for a potential consumer to check them. If you work with big companies, they will often demand to look very deeply at your processes and quality control, and I feel strongly that all consumers should have this right. References are mostly useless, as they are obviously carefully selected. Samples are often minuscule to non-existent.

Have you any suggestions as to how consumers can assure themselves that an operator is up to scratch? Having better-informed consumers would benefit the industry as a whole.

Hi Olaf, and thanks for bringing up this issue. I do indeed have some suggestions for how consumers can check the ‘quality’ of an online course, which I’ve written about in How to Teach English with Technology (2007) and, more recently, in Teaching Online (2010). Yes, twice: I agree it’s an important area!

Although punters are unlikely ever to be given access to internal assessments of online tutors, there are definitely a number of questions they should ask, not only about the tutors but about the online course or organisation as a whole.

Apart from accreditation and validation issues, online courses need to demonstrate current best practice in the field of online learning. I also personally believe that, for training or development to be effective, it needs to be linked to practice and to involve some level of reflection on that practice.

Here are some of the questions we suggest in our writing:

- Course materials: What materials are used in the course? Is a range of media used (text, audio, video…)? Is a range of online tools used to deliver this content and to encourage reflection (online forums, wiki, blog, polls, quizzes and questionnaires, text and audio chat, video-conferencing…)? Is a range of task types used, not just the old ‘read and reflect’ approach? Do tasks cater to a variety of learner styles? How?

- Tutors: Apart from having the necessary subject expertise, how experienced in e-learning are the course tutors? What demonstrable skills, training and experience do they have in this field? How much support can you expect from them, and what form will it take? How quickly will they answer queries and provide support? How much feedback and guidance on course work or on progress is provided, and how? What is the tutor-to-participant ratio?

Questions about course design, course delivery mode, assessment, feedback and certification are also advisable.

Hope this helps!

Nicky

Hi Nicky

Thanks for that, very useful for somebody who is just starting in the field. I’d like to have a closer look at the performance descriptors used in the self-assessment stage. Any pointers to where the full rubric can be found on the web? Cheers.

Hi Ania,

Thanks for dropping by! Bryan Setser’s presentation (linked to in my post above) has some of the descriptors on slide 3 (teachers assess themselves on a scale of 0 to 3), although there is no link to the source.

The iNACOL site also has an interesting research section, http://www.inacol.org/research/, with links to further sites worth exploring…

Thanks, Nicky! Yes, it’s a shame that Bryan Setser’s slide doesn’t include the full information, but thanks for the other link to explore.